Data annotation plays a crucial role in the development of artificial intelligence (AI) and machine learning (ML) models. Accurate annotations are the foundation for training algorithms that power everything from self-driving cars to voice recognition systems. Nonetheless, the process of data annotation is not without its challenges. From maintaining consistency to ensuring scalability, businesses face a number of hurdles that can impact the effectiveness of their ML initiatives. Understanding these challenges, and how to overcome them, is essential for any organization looking to implement high-quality AI solutions.

1. Inconsistency in Annotations

One of the most common problems in data annotation is inconsistency. Different annotators may interpret the same data in different ways, particularly in subjective tasks such as sentiment analysis or image labeling. This inconsistency can lead to noisy datasets that reduce the accuracy of machine learning models.

How to overcome it:

Establish clear annotation guidelines and provide training for annotators. Run regular quality checks, including inter-annotator agreement (IAA) metrics, to measure consistency. Implementing a review system where experienced reviewers validate or correct annotations also improves uniformity.
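
For two annotators, a widely used IAA metric is Cohen's kappa, which corrects raw agreement for the agreement expected by chance: kappa = (p_observed - p_chance) / (1 - p_chance). The minimal Python sketch below assumes both annotators labeled the same items in the same order; the example labels are made up. For more than two annotators, metrics such as Fleiss' kappa or Krippendorff's alpha are typically used instead.

```python
from collections import Counter

def cohens_kappa(labels_a: list[str], labels_b: list[str]) -> float:
    """Cohen's kappa for two annotators who labeled the same items, in the same order."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both annotators must label the same non-empty list of items")
    n = len(labels_a)
    # Observed agreement: share of items on which both annotators chose the same label.
    p_observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: probability of matching by accident, given each annotator's label frequencies.
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    p_chance = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if p_chance == 1.0:
        # Both annotators used one identical label for every item; kappa is undefined here.
        return float("nan")
    return (p_observed - p_chance) / (1 - p_chance)

annotator_1 = ["pos", "neg", "pos", "neu", "pos", "neg"]
annotator_2 = ["pos", "neg", "neu", "neu", "pos", "pos"]
print(round(cohens_kappa(annotator_1, annotator_2), 3))  # 0.478
```

Here the annotators agree on 4 of 6 items (about 0.67), but kappa is lower because part of that agreement would be expected by chance. What counts as "good enough" agreement varies by task, so teams usually set their own target.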

2. High Costs and Time Consumption

Manual data annotation is a labor-intensive process that demands significant time and financial resources. Labeling large volumes of data, particularly for complex tasks such as video annotation or medical image segmentation, can quickly become expensive.

How to overcome it:

Leverage semi-automated tools that use machine learning to assist in the annotation process. Active learning and model-in-the-loop approaches let annotators focus only on the most uncertain or complex data points, increasing efficiency and reducing costs.
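
One simple active-learning strategy is uncertainty sampling: score each unlabeled item by how unsure the current model is (here, the entropy of its predicted class probabilities) and send only the highest-scoring items to human annotators. The sketch below is illustrative; the item IDs and probabilities are invented, and entropy is only one of several possible uncertainty measures (least-confidence and margin sampling are common alternatives).

```python
import math

def entropy(probs: list[float]) -> float:
    """Shannon entropy (in nats) of a predicted class distribution; higher means less certain."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def select_for_annotation(predictions: dict[str, list[float]], budget: int) -> list[str]:
    """Return the IDs of the `budget` items the model is least certain about."""
    ranked = sorted(predictions, key=lambda item: entropy(predictions[item]), reverse=True)
    return ranked[:budget]

# Hypothetical model outputs: class probabilities per unlabeled item.
predictions = {
    "img_001": [0.98, 0.01, 0.01],  # confident: a candidate for auto-labeling or spot checks
    "img_002": [0.40, 0.35, 0.25],  # very uncertain: worth a human label
    "img_003": [0.55, 0.44, 0.01],  # borderline between two classes
    "img_004": [0.90, 0.05, 0.05],
}
print(select_for_annotation(predictions, budget=2))  # ['img_002', 'img_003']
```

In a model-in-the-loop setup, the model would be retrained on the new labels and the selection repeated, so each round of human effort targets the items the model currently finds hardest.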

3. Scalability Issues

As projects grow, the quantity of data needing annotation can become unmanageable. Scaling up without sacrificing quality is a critical challenge, particularly when dealing with diverse data types or multilingual content.

How to overcome it:

Use a robust annotation platform that supports automation, collaboration, and workload distribution. Cloud-based solutions enable teams to work across geographies, while integrated project management tools can streamline operations. Outsourcing to specialized data annotation service providers is another option for handling scale.
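
Workload distribution can be as simple as always handing the next item to whoever currently has the fewest assignments. The minimal sketch below does that with a min-heap. The `distribute` function and its `replicas` parameter are hypothetical, not a feature of any particular platform; setting `replicas` above 1 sends each item to several distinct annotators, which also produces the overlapping labels needed for the inter-annotator agreement checks described in section 1.

```python
import heapq

def distribute(items: list[str], annotators: list[str], replicas: int = 1) -> dict[str, list[str]]:
    """Assign each item to `replicas` distinct annotators, always choosing the least-loaded ones."""
    if not 1 <= replicas <= len(annotators):
        raise ValueError("replicas must be between 1 and the number of annotators")
    # Min-heap of (current load, annotator name); ties go to the alphabetically first name.
    heap = [(0, name) for name in annotators]
    heapq.heapify(heap)
    assignments: dict[str, list[str]] = {name: [] for name in annotators}
    for item in items:
        # Pop all chosen annotators before pushing any back, so an item never goes to the same person twice.
        chosen = [heapq.heappop(heap) for _ in range(replicas)]
        for load, name in chosen:
            assignments[name].append(item)
            heapq.heappush(heap, (load + 1, name))
    return assignments

plan = distribute([f"doc_{i}" for i in range(5)], ["ana", "ben", "chen"], replicas=2)
print({name: len(docs) for name, docs in plan.items()})  # {'ana': 4, 'ben': 3, 'chen': 3}
```

Because every assignment goes to the least-loaded annotators, workloads never differ by more than one item, however many items or annotators are added.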

4. Data Privacy and Security Concerns

Annotating sensitive data such as medical records, financial documents, or personal information introduces security risks. Improper handling of such data can lead to compliance issues and data breaches.

How to overcome it:

Implement strict data governance protocols and work with annotation platforms that offer end-to-end encryption and access controls. Ensure compliance with data protection regulations such as GDPR or HIPAA. For high-risk projects, consider on-premises deployments or anonymizing data before annotation.
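
As a minimal illustration of stripping identifiers before annotation, the sketch below swaps e-mail addresses and phone numbers for placeholder tokens and keeps the original values in a separate `vault` mapping so authorized staff can re-identify records later. Strictly speaking, this is pseudonymization rather than full anonymization. The two regular expressions are simplistic assumptions that miss many kinds of personal data, such as names and addresses, and on their own they do not make a dataset GDPR- or HIPAA-compliant.

```python
import re

# Deliberately simple, illustrative patterns; real PII detection needs much broader coverage.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")

def pseudonymize(text: str, vault: dict[str, str]) -> str:
    """Replace e-mail addresses and phone numbers with placeholder tokens.

    `vault` maps each original value to its token, so a repeated value always gets the
    same token. Store it securely and separately from the data sent to annotators.
    """
    def replacer(kind: str):
        def substitute(match: re.Match) -> str:
            value = match.group(0)
            if value not in vault:
                used = sum(token.startswith(f"[{kind}_") for token in vault.values())
                vault[value] = f"[{kind}_{used + 1}]"
            return vault[value]
        return substitute

    text = EMAIL.sub(replacer("EMAIL"), text)
    return PHONE.sub(replacer("PHONE"), text)

vault: dict[str, str] = {}
record = "Patient reachable at jane.doe@example.com or +1 555-123-4567."
print(pseudonymize(record, vault))  # Patient reachable at [EMAIL_1] or [PHONE_1].
```

Consistent tokens let annotators see that two records mention the same contact without ever seeing the contact itself.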

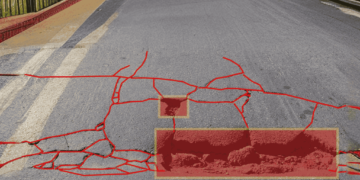

5. Complex and Ambiguous Data

Some data types are inherently difficult to annotate. Examples include satellite imagery, medical diagnostics, or texts with nuanced language. This complexity increases the risk of errors and inconsistent labeling.

How to overcome it:

Employ subject matter experts (SMEs) for annotation tasks requiring domain-specific knowledge. Use hierarchical labeling systems that let annotators break complicated decisions down into smaller, more manageable steps. AI-assisted tools can also help reduce ambiguity in complex datasets.
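
A hierarchical labeling scheme can be modeled as a tree of questions, where each answer narrows the choice until a leaf (the final label) is reached. The minimal sketch below uses a made-up land-cover taxonomy for satellite imagery; the `Node` class, the questions, and the label names are all hypothetical, and a real scheme would come from the project's annotation guidelines.

```python
from dataclasses import dataclass, field

@dataclass
class Node:
    """A step in a hierarchical labeling scheme; a node with no children is a final label."""
    question: str = ""
    children: dict[str, "Node"] = field(default_factory=dict)

def label_item(root: Node, answers: list[str]) -> str:
    """Validate one answer per level and return the full label path, e.g. 'natural/water'."""
    node = root
    for answer in answers:
        if answer not in node.children:
            options = ", ".join(node.children) or "none (already at a final label)"
            raise ValueError(f"{answer!r} is not an option for {node.question!r}; choose from: {options}")
        node = node.children[answer]
    if node.children:
        raise ValueError(f"incomplete label: still need an answer to {node.question!r}")
    return "/".join(answers)

# Hypothetical two-level taxonomy for satellite image tiles.
land_cover = Node("Is the area built-up or natural?", {
    "built-up": Node("Is it mainly residential or industrial?", {
        "residential": Node(),
        "industrial": Node(),
    }),
    "natural": Node("Is it mainly vegetation or water?", {
        "vegetation": Node(),
        "water": Node(),
    }),
})

print(label_item(land_cover, ["natural", "water"]))  # natural/water
```

Each step offers only a few options, and the stored path keeps both the coarse and the fine-grained label available, so a tile an annotator can only classify as "natural" is not forced into a guess.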

6. Annotator Fatigue and Human Error

Repetitive annotation tasks can lead to fatigue, reducing focus and increasing the likelihood of mistakes. This is particularly problematic in large projects requiring extended manual effort.

How to overcome it:

Rotate tasks among annotators, introduce breaks, and monitor performance over time to detect fatigue. Gamification and incentive systems can help maintain motivation. Incorporating quality assurance workflows ensures errors are caught early and corrected efficiently.
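
One way to monitor performance over time is to mix a small number of gold-standard items (items with known correct labels) into each annotator's queue and track accuracy on them over a rolling window. The sketch below is illustrative: the `FatigueMonitor` class, the window size, and the accuracy threshold are hypothetical defaults that a team would tune to its own task.

```python
from collections import deque

class FatigueMonitor:
    """Track one annotator's accuracy on the most recent gold-standard (known-answer) items."""

    def __init__(self, window: int = 20, threshold: float = 0.85, min_samples: int = 10):
        self.recent = deque(maxlen=window)  # 1 = correct, 0 = wrong; the oldest result drops out automatically
        self.threshold = threshold
        self.min_samples = min_samples      # avoid flagging on too few observations

    def record(self, annotator_label: str, gold_label: str) -> None:
        self.recent.append(1 if annotator_label == gold_label else 0)

    def accuracy(self) -> float:
        return sum(self.recent) / len(self.recent) if self.recent else 1.0

    def needs_break(self) -> bool:
        """True once enough gold items are seen and rolling accuracy falls below the threshold."""
        return len(self.recent) >= self.min_samples and self.accuracy() < self.threshold

monitor = FatigueMonitor(window=10, threshold=0.8, min_samples=5)
for _ in range(5):
    monitor.record("cat", "cat")    # early in the session: every gold item correct
print(monitor.needs_break())        # False
for _ in range(4):
    monitor.record("dog", "cat")    # later: several misses in a row
print(monitor.needs_break())        # True
```

A flag from a monitor like this could trigger a suggested break or a task rotation rather than a penalty, in line with the motivation-focused measures above.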

7. Changing Requirements and Evolving Datasets

As AI models evolve, annotation criteria may shift. New labels may be needed, or existing annotations may become outdated, requiring datasets to be re-annotated.

How to overcome it:

Build flexibility into your annotation pipeline. Use version-controlled datasets and maintain a feedback loop between data scientists and annotation teams. Agile methodologies and modular data structures make it easier to adapt to changing requirements.
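
When the label set changes, tagging every annotation with the schema version it was made under makes it possible to upgrade old labels automatically where the mapping is unambiguous and to queue only the rest for re-annotation. The sketch below assumes a hypothetical change from schema v1 to v2 in which `pedestrian` is renamed to `person` and `vehicle` is split into `car` and `truck`; the `Annotation` record and the `migrate` helper are illustrative, not part of any particular tool.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Annotation:
    item_id: str
    label: str
    schema_version: int

# Hypothetical v1 -> v2 mapping: each old label lists the new label(s) it may correspond to.
V1_TO_V2: dict[str, list[str]] = {
    "pedestrian": ["person"],       # renamed: safe to migrate automatically
    "vehicle": ["car", "truck"],    # split: a human must choose
    "background": ["background"],   # unchanged
}

def migrate(annotations: list[Annotation], mapping: dict[str, list[str]], new_version: int):
    """Return (migrated annotations, annotations that need re-annotation)."""
    migrated, needs_review = [], []
    for ann in annotations:
        targets = mapping.get(ann.label, [])
        if len(targets) == 1:
            migrated.append(Annotation(ann.item_id, targets[0], new_version))
        else:
            # A split label (several targets) or a retired label (no target) cannot be mapped safely.
            needs_review.append(ann)
    return migrated, needs_review

old = [
    Annotation("frame_01", "pedestrian", 1),
    Annotation("frame_02", "vehicle", 1),
    Annotation("frame_03", "background", 1),
]
done, review = migrate(old, V1_TO_V2, new_version=2)
print([a.label for a in done])      # ['person', 'background']
print([a.item_id for a in review])  # ['frame_02']
```

Only `frame_02` goes back to annotators, so a schema change costs re-annotation effort in proportion to how many labels actually became ambiguous.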

Data annotation is a cornerstone of effective AI model training, but it comes with significant operational and strategic challenges. By adopting best practices, leveraging the right tools, and fostering collaboration between teams, organizations can overcome these obstacles and unlock the full potential of their data.
